The American company aims to lead in artificial intelligence across a range of industries. Its latest announcements include a prototype model for controlling humanoid robots.

Nvidia, the renowned chipmaker, has further solidified its position in artificial intelligence by introducing a new “super chip,” venturing into quantum computing services, and releasing a suite of tools for building general-purpose humanoid robots, long a staple of science fiction. This article looks at Nvidia’s recent announcements and considers their potential implications.

Nvidia’s Technological Frontiers: A Closer Look

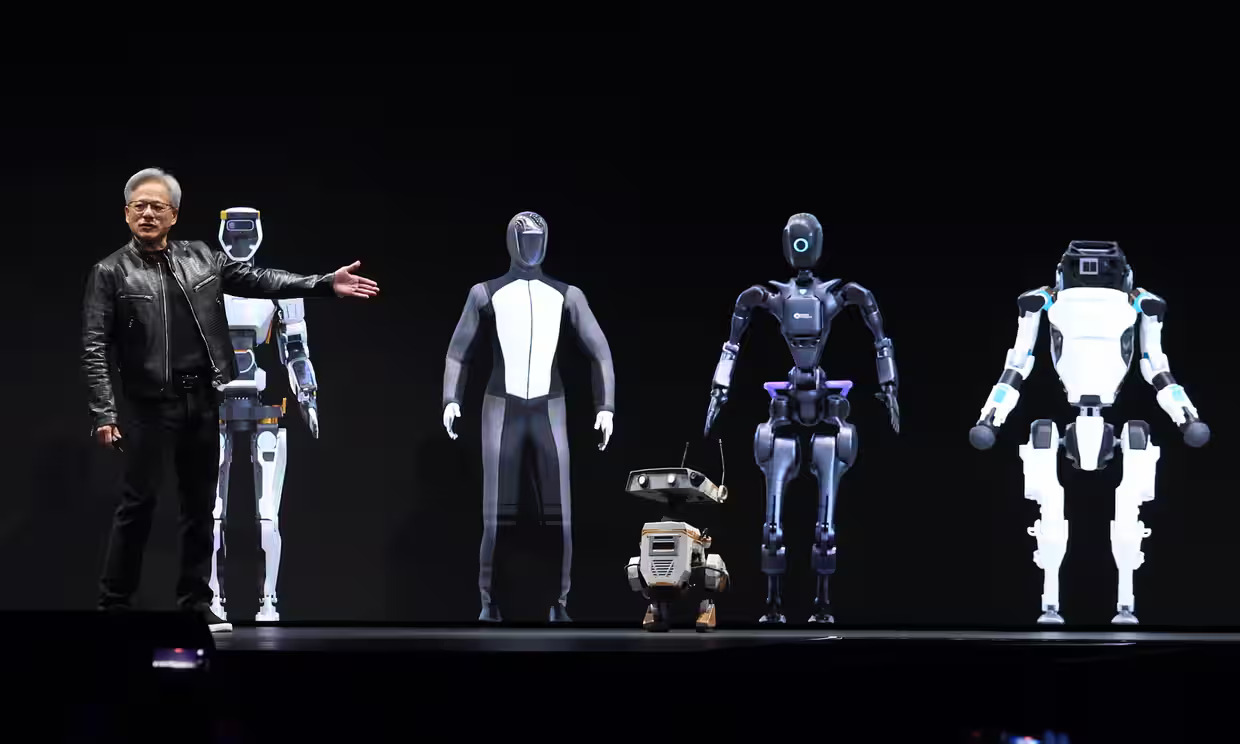

The primary highlight of the company’s annual developer conference on Monday was the introduction of the “Blackwell” series of AI chips. These chips are designed to power the immensely costly data centres that train cutting-edge AI models, including the latest iterations of GPT, Claude, and Gemini.

The Blackwell B200 is a relatively straightforward upgrade over the company’s existing H100 AI chip. According to Nvidia, training an AI model on the scale of GPT-4 currently requires approximately 8,000 H100 chips and 15 megawatts of power. That is enough power to supply approximately 30,000 typical British households.

With the company’s latest chips, the same training run would need only 2,000 B200s and 4MW of power. This could reduce electricity consumption across the AI sector or, alternatively, allow the same amount of electricity to power significantly larger AI models in the future.
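The comparison is easier to judge with the arithmetic written out. The short Python sketch below uses only the figures quoted above (8,000 H100s at 15MW versus 2,000 B200s at 4MW, and the 30,000-household equivalence). The function name and the derived per-chip and per-household values are illustrative, and the per-chip figures assume the quoted totals are simply divided evenly across the chips, which the source does not state.

```python
# Figures as quoted in the article (attributed to Nvidia).
H100_CHIPS, H100_POWER_MW = 8_000, 15.0
B200_CHIPS, B200_POWER_MW = 2_000, 4.0
HOUSEHOLDS_AT_15_MW = 30_000  # the article's household equivalence for 15MW


def compare_training_runs():
    """Derive simple ratios from the quoted chip counts and power figures."""
    chip_ratio = H100_CHIPS / B200_CHIPS           # how many times fewer chips
    power_ratio = H100_POWER_MW / B200_POWER_MW    # how many times less power

    # Assumption: total power divided evenly across chips (includes any
    # overhead that is folded into the quoted totals).
    h100_kw_per_chip = H100_POWER_MW * 1000 / H100_CHIPS
    b200_kw_per_chip = B200_POWER_MW * 1000 / B200_CHIPS

    # Average household draw implied by "15MW ~ 30,000 households".
    household_kw = H100_POWER_MW * 1000 / HOUSEHOLDS_AT_15_MW
    b200_households = B200_POWER_MW * 1000 / household_kw

    return {
        "chip_ratio": chip_ratio,
        "power_ratio": power_ratio,
        "h100_kw_per_chip": h100_kw_per_chip,
        "b200_kw_per_chip": b200_kw_per_chip,
        "household_kw": household_kw,
        "b200_households": b200_households,
    }


if __name__ == "__main__":
    for name, value in compare_training_runs().items():
        print(f"{name}: {value}")
```

On these numbers the chip count falls by a factor of four while total power falls by a factor of 3.75, so the saving comes from needing fewer chips rather than from each chip drawing less power.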

Decoding the ‘Super’ in Super Chips: Unveiling the Secrets of Nvidia’s Advanced Computing

In addition to the B200, Nvidia unveiled another component of the Blackwell series, the GB200 “super chip.” It integrates two B200 chips onto a single board alongside the company’s Grace CPU, creating a system that, according to Nvidia, delivers “30 times the performance” for server farms that run, rather than train, chatbots like Claude or ChatGPT. The company also claims the system cuts energy consumption by up to 25 times.

Putting the components on a single board improves efficiency by cutting the time the chips spend communicating with one another, leaving more of their processing power for the actual work, such as generating a chatbot’s replies.

What If Users Need More Capacity?

Nvidia, boasting a market value exceeding $2 trillion (£1.6 trillion), stands ready to cater to your needs. Consider the GB200 NVL72: a single server rack featuring 72 B200 chips interconnected by nearly two miles of cabling. Still not enough? Then perhaps the DGX Superpod might catch your interest—a colossal AI data centre packed into a shipping-container-sized enclosure, amalgamating eight of these racks into one unit.
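To put the scale in numbers, the sketch below multiplies out the figures quoted above: 72 B200 chips per NVL72 rack and eight racks per Superpod. The GB200 count is an assumption for illustration: it supposes every B200 in a rack sits on a two-chip GB200 board, as the name suggests, which the article does not state outright. The function and constant names are likewise hypothetical.

```python
B200_PER_GB200 = 2        # from the article: a GB200 pairs two B200 chips
B200_PER_NVL72_RACK = 72  # from the article
RACKS_PER_SUPERPOD = 8    # from the article


def superpod_totals():
    """Multiply out the quoted rack and Superpod figures."""
    # Assumption: all 72 B200s in a rack are mounted on GB200 boards.
    gb200_per_rack = B200_PER_NVL72_RACK // B200_PER_GB200
    b200_per_superpod = B200_PER_NVL72_RACK * RACKS_PER_SUPERPOD
    gb200_per_superpod = gb200_per_rack * RACKS_PER_SUPERPOD
    return gb200_per_rack, b200_per_superpod, gb200_per_superpod


if __name__ == "__main__":
    per_rack, b200_total, gb200_total = superpod_totals()
    print(f"GB200 boards per rack: {per_rack}")
    print(f"B200 chips per Superpod: {b200_total}")
    print(f"GB200 boards per Superpod: {gb200_total}")
```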

Pricing details were not revealed at the event, but as the saying goes, if you have to ask the price, you probably cannot afford it. Even the previous generation of chips commanded a hefty price tag of around $100,000 each.

Exploring Robotics Solutions

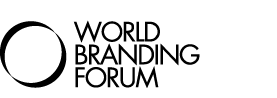

Named after Marvel’s arboreal alien, though not officially connected to the character, Project GR00T is a new foundation model from Nvidia designed to control humanoid robots. Foundation models, such as GPT-4 for text or Stable Diffusion for image generation, are the underlying AI models on which specialised applications can be built.

Although foundation models are the costliest part of the sector to develop, they drive subsequent innovation because they can later be tailored, or “fine-tuned,” to specific use cases.

Nvidia’s foundation model for robotics aims to enable robots to understand natural language and to imitate movements by observing human actions. This allows them to quickly learn coordination, dexterity, and other skills essential for navigating, adapting to, and interacting with the real world.

GR00T is paired with another Nvidia technology, Jetson Thor (another Marvel reference), a system-on-a-chip designed to serve as a robot’s brain. The overarching objective is an autonomous machine that can understand instructions given in ordinary human speech and carry out general tasks, including ones it has not been specifically trained for.

Diving into Quantum Computing

Nvidia is entering the realm of quantum cloud computing, a buzzing sector in which it has not previously been heavily involved. While this technology continues to be at the forefront of research, it has already been integrated into services provided by Microsoft and Amazon. Now, Nvidia is joining the game.

However, Nvidia’s cloud service will not directly link to a quantum computer. Instead, it will offer a service that leverages its AI chips to simulate the functionality of a quantum computer. This approach aims to enable researchers to test their concepts without incurring the costs of accessing an actual quantum computer, which is both rare and expensive. Nvidia plans to expand its platform to provide access to third-party quantum computers.
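To illustrate what simulating a quantum computer on classical hardware means, here is a minimal state-vector sketch in plain Python. It is a toy under stated assumptions, not Nvidia’s software or method: the function names are hypothetical, and it handles only two qubits. The state of n qubits is stored as 2^n complex amplitudes, and each gate is applied as ordinary arithmetic on that list. Because the list doubles in size with every added qubit, larger simulations quickly demand the kind of memory and parallel arithmetic that data-centre chips provide.

```python
import math

# Hadamard gate as a 2x2 matrix: puts a qubit into an equal superposition.
HADAMARD = [
    [1 / math.sqrt(2), 1 / math.sqrt(2)],
    [1 / math.sqrt(2), -1 / math.sqrt(2)],
]


def apply_single_qubit_gate(state, gate, target):
    """Apply a 2x2 gate to qubit `target` (bit 0 = least significant)."""
    new_state = list(state)
    mask = 1 << target
    for i in range(len(state)):
        if (i & mask) == 0:
            # Amplitudes for target = 0 and target = 1, other qubits fixed.
            a, b = state[i], state[i | mask]
            new_state[i] = gate[0][0] * a + gate[0][1] * b
            new_state[i | mask] = gate[1][0] * a + gate[1][1] * b
    return new_state


def apply_cnot(state, control, target):
    """Flip `target` wherever `control` is 1 by swapping amplitude pairs."""
    new_state = list(state)
    c_mask, t_mask = 1 << control, 1 << target
    for i in range(len(state)):
        if (i & c_mask) != 0 and (i & t_mask) == 0:
            j = i | t_mask
            new_state[i], new_state[j] = state[j], state[i]
    return new_state


def bell_state():
    """Prepare the entangled state (|00> + |11>) / sqrt(2) from |00>."""
    state = [1 + 0j, 0j, 0j, 0j]  # two qubits, four amplitudes
    state = apply_single_qubit_gate(state, HADAMARD, target=0)
    return apply_cnot(state, control=0, target=1)


def probabilities(state):
    """Measurement probability of each basis state."""
    return [abs(amplitude) ** 2 for amplitude in state]


if __name__ == "__main__":
    print(probabilities(bell_state()))
```

The resulting probabilities are split evenly between the 00 and 11 outcomes, the signature of an entangled pair, and the whole computation ran on conventional hardware.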


As Nvidia continues to expand its presence in cutting-edge technologies like AI, robotics, and now quantum computing, the possibilities for innovation and discovery are boundless. By offering simulation services and future access to third-party quantum computers, Nvidia is poised to play a significant role in shaping the future of computing and scientific exploration. Stay tuned as Nvidia pushes the boundaries of what’s possible in artificial intelligence and beyond.